Note

Start from the Hugging Face overview of how to generate tokens with an LLM.

Logits Processor

top_p

Top_p (nucleus sampling) keeps the smallest set of most probable candidates whose cumulative probability reaches top_p.

The rest are masked with a filter value such as -inf, which becomes probability 0 after softmax, so that they don’t get picked.
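To make the cut-off concrete, here is a minimal pure-Python sketch of top-p filtering. The helper names `softmax` and `top_p_filter` are hypothetical, and using -inf as the filter value is an assumption of this sketch; it is not the library implementation.

```python
import math

def softmax(logits):
    """Convert logits to probabilities; exp(-inf) evaluates to 0.0."""
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]

def top_p_filter(logits, top_p, filter_value=-math.inf):
    """Keep the smallest set of top tokens whose probability mass reaches top_p."""
    probs = softmax(logits)
    # Visit tokens from most to least probable.
    order = sorted(range(len(logits)), key=lambda i: probs[i], reverse=True)
    kept, cumulative = set(), 0.0
    for i in order:
        kept.add(i)
        cumulative += probs[i]
        if cumulative >= top_p:
            break
    # Everything outside the kept set is masked.
    return [x if i in kept else filter_value for i, x in enumerate(logits)]

# Toy distribution: probabilities 0.5, 0.3, 0.15, 0.05.
logits = [math.log(p) for p in (0.5, 0.3, 0.15, 0.05)]
filtered = top_p_filter(logits, top_p=0.7)
print(softmax(filtered))  # approximately [0.625, 0.375, 0.0, 0.0]
```

With top_p=0.7 the first two tokens are kept (0.5 + 0.3 = 0.8), and their probabilities are renormalized after the mask.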

top_k

Top_k simply keeps the top_k most probable candidates, i.e., the k tokens with the highest output scores.

The rest are masked with a filter value such as -inf so that they get probability 0 after the softmax layer and are never picked.
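The same idea for top-k can be sketched in a few lines of pure Python. `top_k_filter` is a hypothetical helper, the -inf filter value is an assumption, and ties with the k-th score are kept in this sketch.

```python
import math

def top_k_filter(logits, top_k, filter_value=-math.inf):
    """Keep the top_k highest logits and replace the rest with filter_value."""
    top_k = min(top_k, len(logits))
    # The k-th largest logit is the cut-off; anything below it is masked.
    threshold = sorted(logits, reverse=True)[top_k - 1]
    return [x if x >= threshold else filter_value for x in logits]

def softmax(logits):
    """Convert logits to probabilities; exp(-inf) evaluates to 0.0."""
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]

scores = [2.0, 0.5, 1.0, -1.0]
filtered = top_k_filter(scores, top_k=2)
print(filtered)           # [2.0, -inf, 1.0, -inf]
print(softmax(filtered))  # masked tokens end up with probability 0.0
```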

Parameters

temperature

Temperature is applied by dividing the logits by temperature right before softmax. A higher temperature leads to more diverse output. Note, however, that temperature does not change the rank order of the probabilities, so it has no effect on decoding when do_sample=False.
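A toy example shows both effects: the distribution flattens as temperature grows, while the argmax stays the same. The logits and the helper `apply_temperature` are made up for illustration.

```python
import math

def softmax(logits):
    """Convert logits to probabilities."""
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]

def apply_temperature(logits, temperature):
    """Divide every logit by a strictly positive temperature."""
    if temperature <= 0:
        raise ValueError("temperature must be strictly positive")
    return [x / temperature for x in logits]

logits = [2.0, 1.0, 0.0]
for t in (0.5, 1.0, 2.0):
    probs = softmax(apply_temperature(logits, t))
    best = probs.index(max(probs))
    # The top probability shrinks as t grows, but the argmax never moves.
    print(t, [round(p, 3) for p in probs], "argmax:", best)
```

Because dividing by a positive constant preserves ordering, greedy decoding picks the same token at any temperature.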

```python
import torch
from transformers import LogitsProcessor


class TemperatureLogitsWarper(LogitsProcessor):
    def __init__(self, temperature: float):
        # Reject anything that is not a strictly positive float.
        if not isinstance(temperature, float) or not (temperature > 0):
            except_msg = (
                f"`temperature` (={temperature}) has to be a strictly positive float, otherwise your next token "
                "scores will be invalid."
            )
            if isinstance(temperature, float) and temperature == 0.0:
                except_msg += " If you're looking for greedy decoding strategies, set `do_sample=False`."
            raise ValueError(except_msg)

        self.temperature = temperature

    def __call__(self, input_ids: torch.LongTensor, scores: torch.FloatTensor) -> torch.FloatTensor:
        # Scale the logits; softmax is applied later in the generation loop.
        scores_processed = scores / self.temperature
        return scores_processed
```

repetition_penalty

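The scores of tokens that already occur in the sequence are scaled down: positive logits are divided by self.penalty and negative logits are multiplied by it, so a penalty greater than 1 makes those tokens less likely.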
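A minimal sketch of the penalty on a plain list of logits. `apply_repetition_penalty` is a hypothetical helper; it assumes the sign-dependent rule (divide positive logits, multiply negative ones), since dividing a negative logit would raise it instead of lowering it.

```python
def apply_repetition_penalty(logits, generated_ids, penalty):
    """Penalize the logits of tokens that were already generated."""
    penalized = list(logits)
    for token_id in set(generated_ids):
        score = penalized[token_id]
        # Negative logits are multiplied so that the penalty always lowers the score.
        penalized[token_id] = score * penalty if score < 0 else score / penalty
    return penalized

logits = [3.0, -1.0, 0.5, 2.0]
# Tokens 0 and 1 have already been generated; tokens 2 and 3 are untouched.
print(apply_repetition_penalty(logits, generated_ids=[0, 1, 0], penalty=1.5))
# [2.0, -1.5, 0.5, 2.0]
```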

num_return_sequences

For every prefix/prompt, return multiple independently generated results.

return_dict_in_generate

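The main switch for returning intermediate results: when set to True, generate() returns a ModelOutput object instead of a plain tensor of token ids, and options such as output_scores only take effect when it is enabled.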

Different Decoding Strategies

greedy search

The model selects the token with the highest conditional probability in each step during generation.

beam search

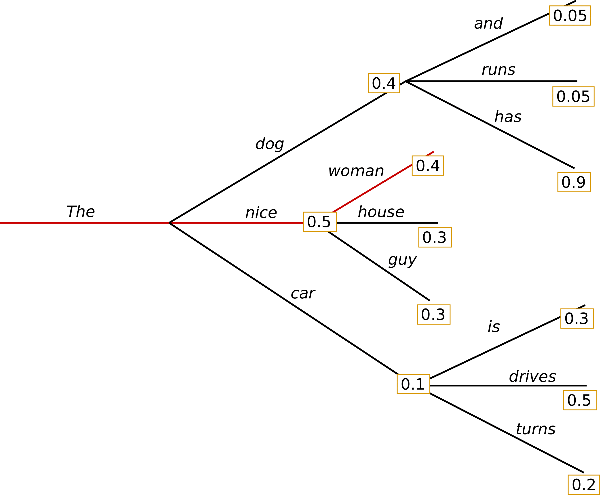

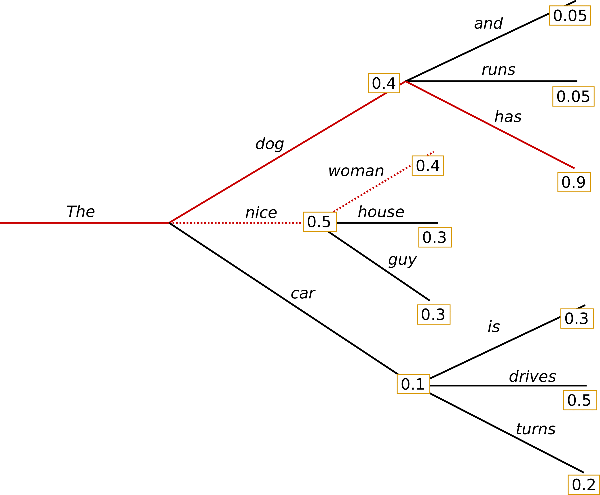

At each time step, the k most probable candidate sequences are kept and carried to the next time step, where the model generates the next token for each of the k sequences. At the end, the sequence with the highest overall probability is chosen.

As illustrated in the graph above, at time step 1 the sequences (“The”, “dog”) and (“The”, “nice”) are kept. At time step 2, the model generates the next token for both sequences, and the new sequence (“The”, “dog”, “has”) is selected because its overall probability is higher: 0.4 * 0.9 = 0.36 > 0.5 * 0.4 = 0.2.

Tip

- The scores are cumulative probabilities. In practice log probabilities are used, because additions are cheaper than multiplications and because summing logs avoids the numerical underflow caused by multiplying many small probabilities.

- In each step, 1 + #eos_tok tokens are kept so that we have at least one non-EOS token per beam.
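The “The dog has” example can be reproduced with a small beam search over a toy next-token table, scoring sequences with summed log probabilities. Only the values 0.4, 0.5, 0.9 and 0.4 come from the example above; every other probability in the table is made up, and the sketch ignores EOS handling entirely.

```python
import math

# Toy next-token table. Values other than 0.4, 0.5, 0.9 and 0.4 are
# illustrative assumptions.
NEXT = {
    ("The",): {"dog": 0.4, "nice": 0.5, "car": 0.1},
    ("The", "dog"): {"has": 0.9, "runs": 0.05, "and": 0.05},
    ("The", "nice"): {"woman": 0.4, "house": 0.3, "guy": 0.3},
    ("The", "car"): {"is": 0.3, "drives": 0.5, "turns": 0.2},
}

def beam_search(prefix, num_beams, steps):
    """Return the surviving beams as (log-probability, sequence) pairs, best first."""
    beams = [(0.0, prefix)]
    for _ in range(steps):
        candidates = []
        for score, seq in beams:
            for token, prob in NEXT[seq].items():
                # Adding log probabilities is equivalent to multiplying probabilities.
                candidates.append((score + math.log(prob), seq + (token,)))
        # Keep only the num_beams highest-scoring sequences.
        beams = sorted(candidates, reverse=True)[:num_beams]
    return beams

best_score, best_seq = beam_search(("The",), num_beams=2, steps=2)[0]
greedy_score, greedy_seq = beam_search(("The",), num_beams=1, steps=2)[0]
print(best_seq, round(math.exp(best_score), 2))      # ('The', 'dog', 'has') 0.36
print(greedy_seq, round(math.exp(greedy_score), 2))  # ('The', 'nice', 'woman') 0.2
```

Setting num_beams=1 reduces the search to greedy decoding, which commits to “nice” at step 1 and misses the higher-probability sequence.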

constrained beam search

In open-ended generation, a couple of reasons have been brought forward why beam search might not be the best possible option:

- Beam search can work very well in tasks where the length of the desired generation is more or less predictable as in machine translation or summarization - see Murray et al. (2018) and Yang et al. (2018). But this is not the case for open-ended generation where the desired output length can vary greatly, e.g. dialog and story generation.

- We have seen that beam search heavily suffers from repetitive generation. This is especially hard to control with n-gram- or other penalties in story generation since finding a good trade-off between inhibiting repetition and repeating cycles of identical n-grams requires a lot of finetuning.

- As argued in Ari Holtzman et al. (2019), high quality human language does not follow a distribution of high probability next words. In other words, as humans, we want generated text to surprise us and not to be boring/predictable. The authors show this nicely by plotting the probability a model would give to human text vs. what beam search does.

Quantization

The example below loads a model in 4-bit using NF4 quantization with double quantization, and uses bfloat16 as the compute dtype for faster training:

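```python
import torch
from transformers import AutoModelForCausalLM, BitsAndBytesConfig

nf4_config = BitsAndBytesConfig(
    load_in_4bit=True,                      # store weights in 4-bit
    bnb_4bit_quant_type="nf4",              # NormalFloat4 data type
    bnb_4bit_use_double_quant=True,         # also quantize the quantization constants
    bnb_4bit_compute_dtype=torch.bfloat16,  # dtype used for the matmuls
)

# `model_id` is the Hub identifier of the model to load.
model_nf4 = AutoModelForCausalLM.from_pretrained(model_id, quantization_config=nf4_config)
```

sampling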

stopping criteria

```python
from transformers import AutoTokenizer, AutoModelForCausalLM, \
    GenerationConfig, BitsAndBytesConfig, StoppingCriteria, \
    TextStreamer, pipeline
import torch


class GenerateSqlStoppingCriteria(StoppingCriteria):
    def __call__(self, input_ids, scores, **kwargs):
        # stops when sequence "```\n" is generated
        # Baichuan2 tokenizer
        # ``` -> 84
        # \n -> 5
        return (
            len(input_ids[0]) > 1
            and input_ids[0][-1] == 5
            and input_ids[0][-2] == 84
        )

    # __len__ and __iter__ let this single criterion be passed
    # where a list of stopping criteria is expected.
    def __len__(self):
        return 1

    def __iter__(self):
        yield self


model_id = "baichuan-inc/Baichuan2-13B-chat"

tokenizer = AutoTokenizer.from_pretrained(
    model_id,
    use_fast=False,
    trust_remote_code=True,
    revision="v2.0"
)

quantization_config = BitsAndBytesConfig(
    load_in_4bit=True,
    bnb_4bit_compute_dtype=torch.bfloat16,
)

model = AutoModelForCausalLM.from_pretrained(
    model_id,
    device_map="auto",
    quantization_config=quantization_config,
    trust_remote_code=True,
)
model.generation_config = GenerationConfig.from_pretrained(model_id, revision="v2.0")

# Print tokens to stdout as they are generated, without echoing the prompt.
streamer = TextStreamer(tokenizer, skip_prompt=True)

# Bound to a new name so the imported `pipeline` function is not shadowed.
generator = pipeline(
    "text-generation",
    model=model,
    tokenizer=tokenizer,
    revision="v2.0",
    do_sample=False,
    num_return_sequences=1,
    eos_token_id=tokenizer.eos_token_id,
    stopping_criteria=GenerateSqlStoppingCriteria(),
    streamer=streamer,
)
```

Speed Up Generation

speculative decoding

In speculative decoding, a small but competent draft model proposes the next few tokens. The base model (the big one) then checks the proposed tokens, and each one is accepted or rejected by comparing the probabilities the two models assign to it. From the first rejected token onward, the base model generates the token itself. It can be proved mathematically that the resulting probability distribution is the same as if the base model had generated every token. Hence there is no loss in output quality, while generation is significantly faster.
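The claim that the output distribution is unchanged can be checked numerically for a single token. The sketch below assumes the acceptance rule commonly used in speculative sampling: accept a draft token x with probability min(1, p(x)/q(x)), otherwise resample from the normalized positive part of p - q. The distributions `p` and `q` are toy values, the function names are hypothetical, and q(x) > 0 is assumed for every token.

```python
def residual_distribution(p, q):
    """Distribution used to resample after a rejection: normalize max(0, p - q)."""
    diff = [max(0.0, pi - qi) for pi, qi in zip(p, q)]
    total = sum(diff)
    if total == 0.0:
        # p equals q, so a rejection never happens; any distribution works here.
        return list(p)
    return [d / total for d in diff]

def output_distribution(p, q):
    """Exact distribution of the token emitted by one accept/reject step."""
    # The draft proposes x ~ q; it is accepted with probability min(1, p[x] / q[x]).
    accepted = [qi * min(1.0, pi / qi) for pi, qi in zip(p, q)]
    reject_prob = 1.0 - sum(accepted)
    residual = residual_distribution(p, q)
    # On rejection, the replacement token is drawn from the residual distribution.
    return [a + reject_prob * r for a, r in zip(accepted, residual)]

p = [0.6, 0.3, 0.1]  # base model distribution (toy values)
q = [0.3, 0.5, 0.2]  # draft model distribution (toy values)
print(output_distribution(p, q))  # matches p up to floating-point error
```

Tokens the draft model over-proposes are rejected part of the time, and the rejected mass is redistributed to the tokens it under-proposes, which is why the result lands exactly on p.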

Recent approaches include LLMLingua, which speeds up inference by compressing the prompt and KV cache with minimal performance loss, and Medusa, which matches the performance of speculative sampling by attaching and training multiple Medusa heads, eliminating the need for a small yet competent draft model. Note that Medusa does require extra training.